Three years ago, two lawyers in New York cited fake cases from ChatGPT in a court filing. They got fined $5,000. Last month, a lawyer in the same courthouse did the same thing. The judge entered default judgment. His client lost the case.

In between, more than 300 judges issued AI-specific orders, 20+ state bar associations published ethics opinions and formal guidance, Canada and the US both developed regulatory frameworks, and the documented instances of AI hallucinations in court filings crossed 300, with the pace of new cases accelerating sharply through 2025.

We tracked all of it. Here's what we found.

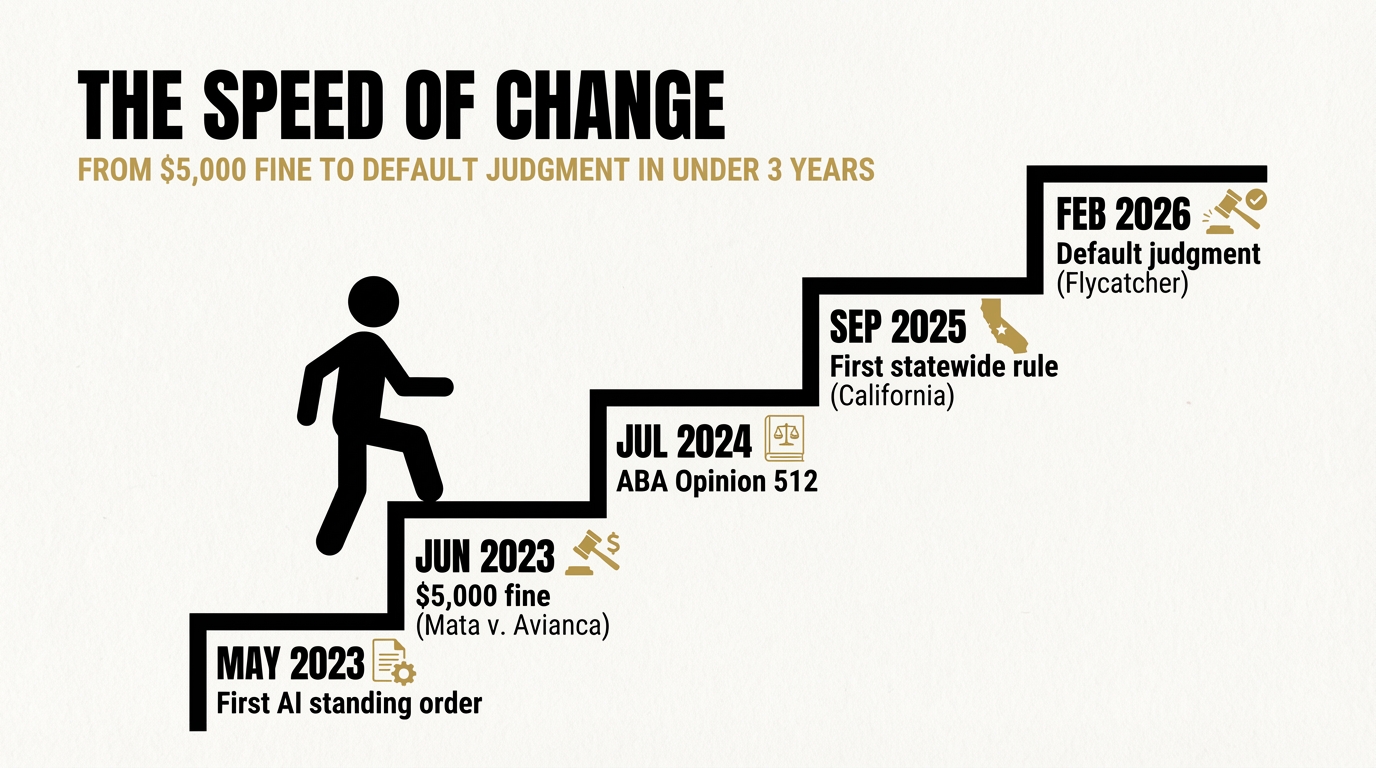

The speed of change

The timeline tells the real story.

May 2023: Judge Brantley Starr in the Northern District of Texas issues the first federal AI standing order. It requires every filer to certify whether AI was used.

June 2023: Mata v. Avianca is decided. $5,000 fine. The standing order movement begins.

July 2024: The ABA issues Formal Opinion 512, the first national ethics framework for AI in legal practice. Six duties: competence, confidentiality, communication, candour, supervision, fees.

September 2025: California becomes the first state to adopt a statewide court rule on generative AI (Rule 10.430), requiring any court that permits AI use by judicial officers or staff to adopt a formal use policy.

February 2026: Flycatcher v. Affable Avenue. Default judgment. A party loses the entire case because their lawyer repeatedly filed AI-hallucinated citations despite multiple court warnings.

In less than three years, the profession went from "should we worry about this?" to default judgments and one-year suspensions.

Five trends shaping the landscape

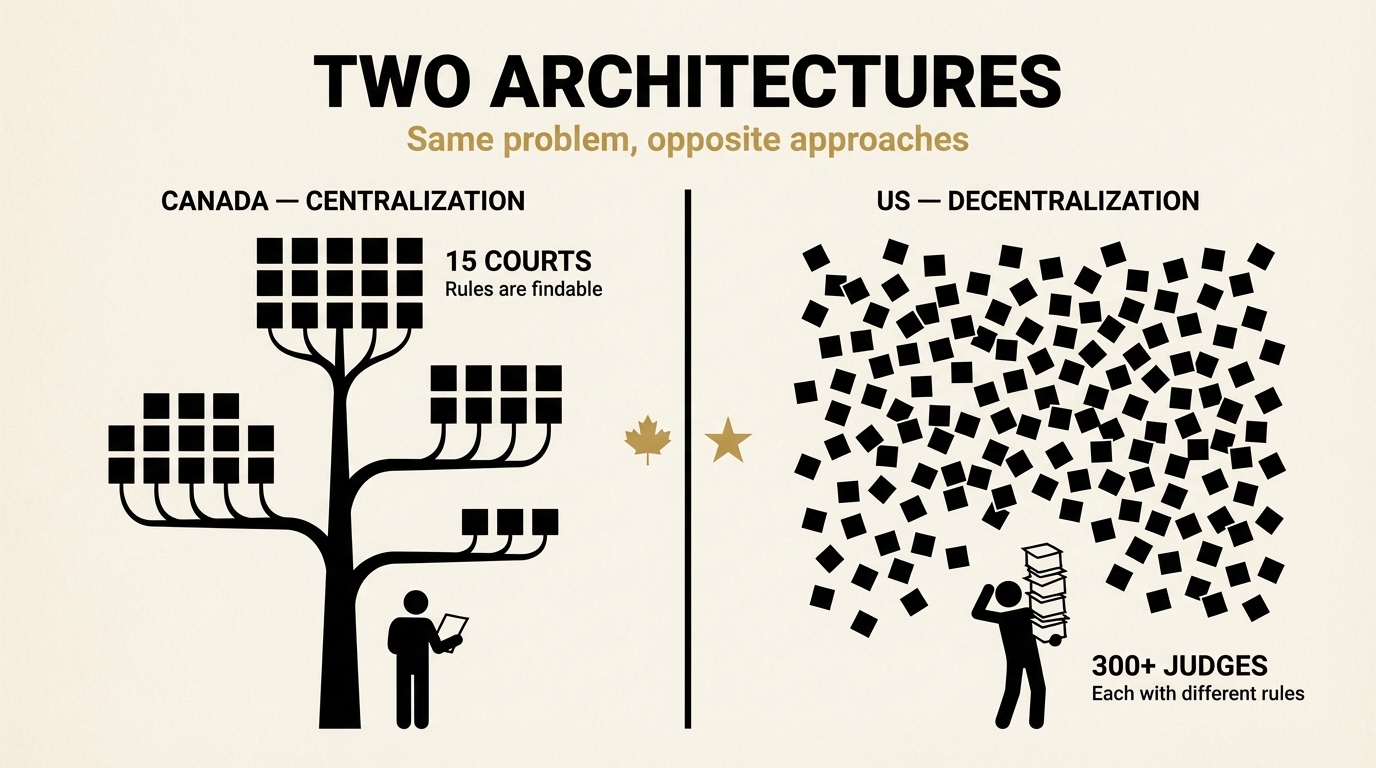

1. Two countries, two architectures

The US and Canada are both regulating AI use by lawyers. They're doing it in fundamentally different ways.

Canada chose centralization. The Federal Court of Canada issued one practice direction that applies nationally: if you file there, you declare AI use in the first paragraph. Period. Ontario, Manitoba, Yukon, and Nova Scotia all followed with court-wide directions. The Law Society of Ontario published guidance documents. The Canadian Judicial Council issued core guidelines. Roughly 15 courts and tribunals, multiple law societies, and national bodies have weighed in. The rules are findable.

The US chose decentralization. Over 300 individual judges have issued their own standing orders, each with different requirements. No uniform federal rule exists. The Fifth Circuit proposed one, then withdrew it. The result: a lawyer practicing in multiple jurisdictions has to check the standing orders of every judge they appear before. Two judges in the same courthouse can have different AI rules.

The practical implication: compliance is simpler in Canada and genuinely hard in the US.

2. Three regulatory tiers have emerged

Across both countries, courts are falling into three tiers, and the pattern is remarkably consistent:

Tier 1: Mandatory certification. You file a sworn certificate on the docket attesting to AI use or non-use. In the US, this is the Judge Starr model, adopted by 100+ federal judges. In Canada, the Federal Court requires a declaration in the first paragraph of any filing containing AI-generated content.

Tier 2: Mandatory disclosure. You must disclose AI use and identify the tool, but no formal certificate is required. Multiple judges in the Northern District of Illinois and the Northern District of California follow this approach. In Canada, Manitoba, Yukon, and Nova Scotia require disclosure of which tool was used and how.

Tier 3: Existing rules apply. No new AI-specific requirements. The Second Circuit, the Fifth Circuit, and Illinois state courts have explicitly taken this position. The Fifth Circuit proposed a certification rule, then decided against it, reasoning that Rule 11 is sufficient.

One outlier: Judge Boyko in the Northern District of Ohio bans AI use in filing preparation entirely. No Canadian court has gone this far.
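For firms turning these tiers into an internal checklist, the classification can be sketched as a small lookup. This is a minimal illustration, not a compliance tool: the names `Tier`, `FORUM_TIERS`, and `filing_requirement` are hypothetical, and the four entries simply restate examples from this post. A real table would be keyed per judge and checked against the current text of each order.

```python
from enum import Enum


class Tier(Enum):
    """The three tiers described above, plus the outright-ban outlier."""
    CERTIFICATION = "file a certificate attesting to AI use or non-use"
    DISCLOSURE = "disclose AI use and identify the tool"
    EXISTING_RULES = "no AI-specific step; existing rules still apply"
    PROHIBITED = "AI may not be used to prepare the filing"


# Hypothetical, illustrative entries drawn only from the examples in this post.
FORUM_TIERS = {
    "N.D. Texas (Judge Starr)": Tier.CERTIFICATION,
    "Manitoba": Tier.DISCLOSURE,
    "Fifth Circuit": Tier.EXISTING_RULES,
    "N.D. Ohio (Judge Boyko)": Tier.PROHIBITED,
}


def filing_requirement(forum: str, used_ai: bool) -> str:
    """Return a one-line reminder of what the forum's tier asks of a filer."""
    tier = FORUM_TIERS.get(forum)
    if tier is None:
        # Unlisted forums are never assumed to be safe.
        return "unknown forum: check the judge's standing order"
    if tier is Tier.PROHIBITED:
        return "blocked: " + tier.value if used_ai else "ok: no AI used"
    return tier.value


print(filing_requirement("Manitoba", used_ai=True))
print(filing_requirement("Some Other Court", used_ai=False))
```

Defaulting unlisted forums to "unknown" rather than "no requirement" reflects the decentralized US picture: the absence of an entry means nobody has checked yet, not that no order exists.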

3. Sanctions are escalating fast

The severity of penalties has increased dramatically in under three years.

| Year | Case | Penalty |
|---|---|---|
| 2023 | Mata v. Avianca | $5,000 fine |
| 2024 | Park v. Kim | Disciplinary referral |
| 2024 | Gauthier v. Goodyear | $2,000 + mandatory CLE |
| 2025 | Wadsworth v. Walmart | $5,000 total across three attorneys; lead attorney's pro hac vice revoked |
| 2025 | Noland v. Land of the Free | $10,000 + State Bar referral |
| 2026 | Flycatcher v. Affable Avenue | Default judgment |

Three things stand out:

The tools don't matter. ChatGPT, Claude, Gemini, Grok, and even a major firm's proprietary AI tool (Morgan & Morgan's MX2.law) have all produced hallucinated citations that led to sanctions. The Noland case involved four different AI tools. The problem is the workflow, not the product.

Big firms aren't immune. Wadsworth v. Walmart sanctioned two Morgan & Morgan attorneys and one from Goody Law Group. Morgan & Morgan is among the 40 largest US firms by headcount. Their in-house AI tool hallucinated eight out of nine cited cases.

Default judgment is now on the table. In Flycatcher, the court didn't just fine the lawyer. It ended the case. The client lost. That's a new ceiling for consequences that changes the risk calculation entirely.

Canada hasn't had its Mata v. Avianca moment yet. But the Federal Court reported only 3–4 AI disclosures in 28,000 filings in 2024, or about 0.01% of filings. That means either nobody is using AI, or nobody is disclosing.

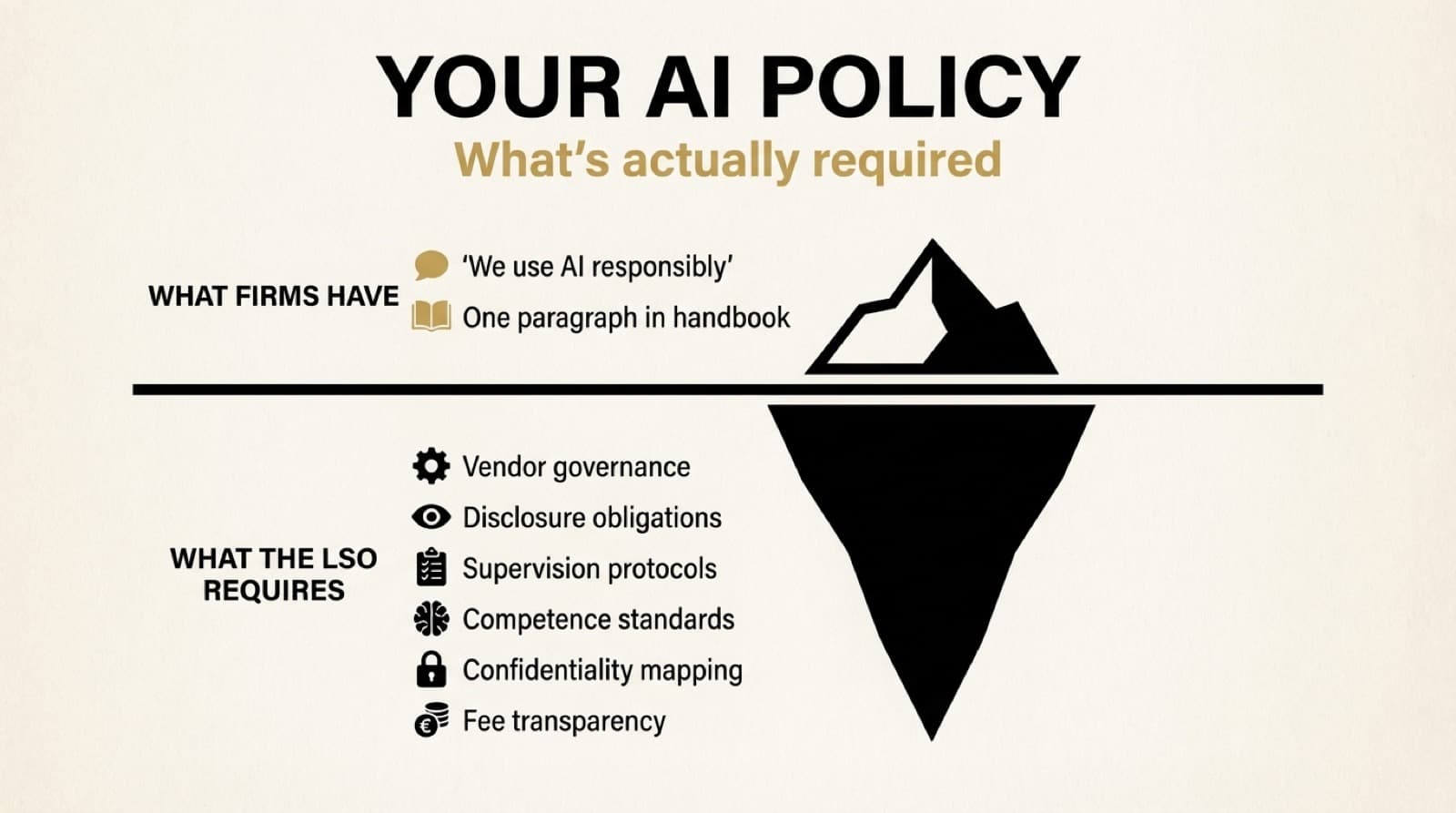

4. The ethics framework is converging

Despite different architectures, the US and Canada have landed on the same core obligations. Both the ABA (Formal Opinion 512) and the LSO (Practice Note on Professional Obligations) map to essentially the same set of duties:

- Competence — understand the technology before you use it

- Confidentiality — don't put client data into tools that use it for training

- Supervision — treat AI output like work from an unsupervised junior

- Candour — disclose AI use when it impacts client interests or court filings

- Fees — you can't bill eight hours for two hours of AI-assisted work

- Verification — every citation, every quote, every case must be checked by a human

The convergence is striking. Oregon requires informed client consent before using open AI models. Pennsylvania says the same. North Carolina says to avoid inputting client-specific information into publicly available AI. The LSO says anonymizing isn't enough. Get informed consent.

The message across 20+ bar opinions and law society guidance documents: AI is a tool. You're responsible for everything it produces.

5. New rules are coming, and they're bigger than disclosure

The next wave goes beyond "did you use AI?" to "what happens when AI generates evidence?"

Proposed Federal Rule of Evidence 707 would create a Daubert-style standard for AI-generated evidence. If adopted, it would be the first uniform federal rule specifically addressing AI in the courtroom. The public comment period closed February 2026; the rule is now under review for adoption.

Ontario's O. Reg. 384/24 already requires signed certification of the authenticity of every authority cited in a factum, applying to all parties regardless of whether AI was used. This is arguably more advanced than anything in the US: instead of asking about AI use, it simply requires you to verify your sources. Period.

California's Rule 10.430 is the first statewide court rule requiring courts that permit AI use by judicial officers or staff to adopt formal use policies by a set deadline. It governs courts' internal AI governance, not attorney filings. Not guidance. A rule.

The direction is clear: disclosure requirements were phase one. Evidentiary standards, verification mandates, and institutional policies are phase two.

What this means for your firm

If you practice in Canada, the rules are consolidated but the compliance gap is real. The LSO published guidance documents. Most firms haven't mapped them into an internal policy. The courts have practice directions. Most lawyers haven't read them.

If you practice in the US, the rules are scattered but the consequences are documented. 300+ judicial orders. Escalating penalties across hundreds of hallucination cases. No sign of slowing down.

If you practice cross-border, you're navigating the most complex AI compliance landscape in the legal profession. Two countries, two architectures, hundreds of individual requirements.

In all three scenarios, the firms that are acting now — drafting policies, training teams, setting review cycles — are building the infrastructure they'll need regardless of how the rules evolve.

The ones that aren't are running a risk that gets more expensive every month.

This post is based on our comprehensive research covering 16 Canadian courts and tribunals, 300+ US judicial orders, 20+ state bar ethics opinions and formal guidance, and the complete sanctions case law through March 2026.

- Canada: AI Practice Directions & LSO Compliance Framework — judgementlayer.com/resources

- United States: Court Orders, Bar Opinions & Sanctions Case Law — judgementlayer.com/resources

Subscribe to the JudgementLayer newsletter for weekly intelligence on AI governance in legal practice.